Once you’ve identified a project, assemble the Integrated Product Team (IPT) outlined in Chapter 4: Developing the AI workforce to ensure the necessary parties are engaged and dedicated to delivering success. Whether the project is a small pilot or a full-scale engagement, moving through the AI life cycle to go from business problem to AI solution can be hard to manage.

Internal prototype and piloting

Internal or organic prototypes (exploration without vendor support) are a great way to show value without committing the resources required for a production deployment. They let you take a subset of the overall integration and tackle one aspect of it to show how wider adoption can happen. This requires technical skills, but it can rapidly increase AI’s adoption rate because senior leaders see the value before committing resources.

Prototyping internally can help identify where in the life cycle to seek a vendor, since not every step requires vendor support, whether from one vendor or several. It can also show when and how best to engage a vendor: it could reveal either that you should engage early to turn an internal prototype into a pilot, or that you should develop a pilot before engaging a vendor for full-scale production.

From pilot to production

Once you’ve completed a successful pilot, evaluate its effectiveness against your objectives. If the pilot proved enough value, as measured by clearly defined and quantified KPIs, that your agency wants a longer-term solution, seek to move the pilot to production.

If you need vendor support for scaling, take the key findings from the pilot and translate them into a procurement requirement so a private vendor can take over providing the service. The pilot’s success allows the work already done to serve as the starting point for the requirements document.

Prototypes and pilots intentionally scale the problem down to focus primarily on data gathering and implementation. When moving to production, you have to consider the entire pipeline. The requirements must specify how the model’s results will be evaluated, used, and updated.

The three most important items to consider when moving to production are these:

- Project ownership: What part of the organization will assume responsibility for the product’s day-to-day operation?

- Implementation plan: Since the pilot addressed only a small part of the overarching problem, how will you roll out the solution to the whole organization?

- Sunset evaluations: At what point will the organization no longer need the results coming from the AI solution? Who will make that evaluation, and how?

Answering these questions requires evidence about how the AI solution actually performs, which is why the test and evaluation process is critical for AI projects.

Start building AI capabilities

If there are existing data, analytics, or even AI teams, align them to the identified use cases and the objective of demonstrating mission or business value. If there are no existing teams, your agency may still have personnel with relevant skill sets who haven’t yet been identified. Survey the workforce to find this talent in-house to begin building AI institutional capability.

To complement government personnel, consider bringing in contractor AI talent and/or AI products and services. Especially in the early stages, government AI teams can lean on private-sector AI capabilities to ramp up government AI capability quickly. But approach this carefully so that the agency sustains robust institutional AI capabilities. For early teams, focus on bringing in contractors with a clear mandate to train government personnel or to provide infrastructure services when the AI support element has not yet been stood up.

Buy or build

The commercial marketplace offers a vast array of AI products and services, including some of the most advanced capabilities available. Use the research and innovation of industry and academia to boost government AI capabilities. This can speed AI adoption and help train your team on the specific workflows and pipelines needed to create AI capabilities.

Agencies should also focus on building their own sustainable institutional AI capability. This capability shouldn’t rely too heavily on external parties such as vendors and contractors, whose profit-seeking nature gives them different incentives from the agency’s. Especially with limited AI talent, agencies should strategically acquire the right skills for the right tasks to scale AI within the agency.

Agencies’ ultimate goal should be to create self-service models and shareable capabilities rather than pursuing contractors’ made-to-order solutions. Agencies must weigh the benefits and limitations of building or acquiring the skills and tools needed for an AI implementation. Answering the “buy or build” question depends on the program office’s function and the nature of the commercial offering.

External AI products and services can be broadly grouped into these categories:

- Software tools that use AI as part of providing another product or service: Examples include email providers that use AI in their spam filters, search engines that use AI to provide more relevant search results, language translation APIs that use AI for natural language understanding, and business intelligence tools that use AI to provide quick analytics.

- Software tools that help AI practitioners be more effective: Examples include tools for automating data pipelines, labeling data, and analyzing model errors.

- Open source: Open-source software is used throughout industry and heavily relied on for machine learning, deep learning, and AI research, development, testing, and ultimately operation. Note that many of these frameworks and libraries are integrated into many top proprietary software applications.

Mission centers and program offices, the heart of why agencies exist in the first place, need to ensure a sustainable institutional AI resource by focusing on building. A team of government AI practitioners dedicated to the mission’s long-term success is necessary for building robust core capabilities for the agency.

Commercial tools that enhance these practitioners’ effectiveness may be worth the cost in the short term. However, mission centers sometimes have unique requirements that do not exist in a commercial context, which makes standalone commercial-off-the-shelf (COTS) offerings less likely to fit. Even if the agency could find an adequate COTS product, relying so heavily on external parties for its core functions would be a major operating risk.

On the other hand, business centers are likely to benefit from AI-enhanced standalone tools. Business centers often focus on efficiency and have requirements that closely match commercial ones, so COTS products are more likely to fit. Business centers still need government AI talent to evaluate and select the most appropriate commercial AI offerings.

Besides products and services and their associated support personnel, contractors may also offer standalone AI expertise as contractor AI practitioners. These kinds of services are well suited to limited or temporary use cases, where developing long-term or institutional capability is not needed.

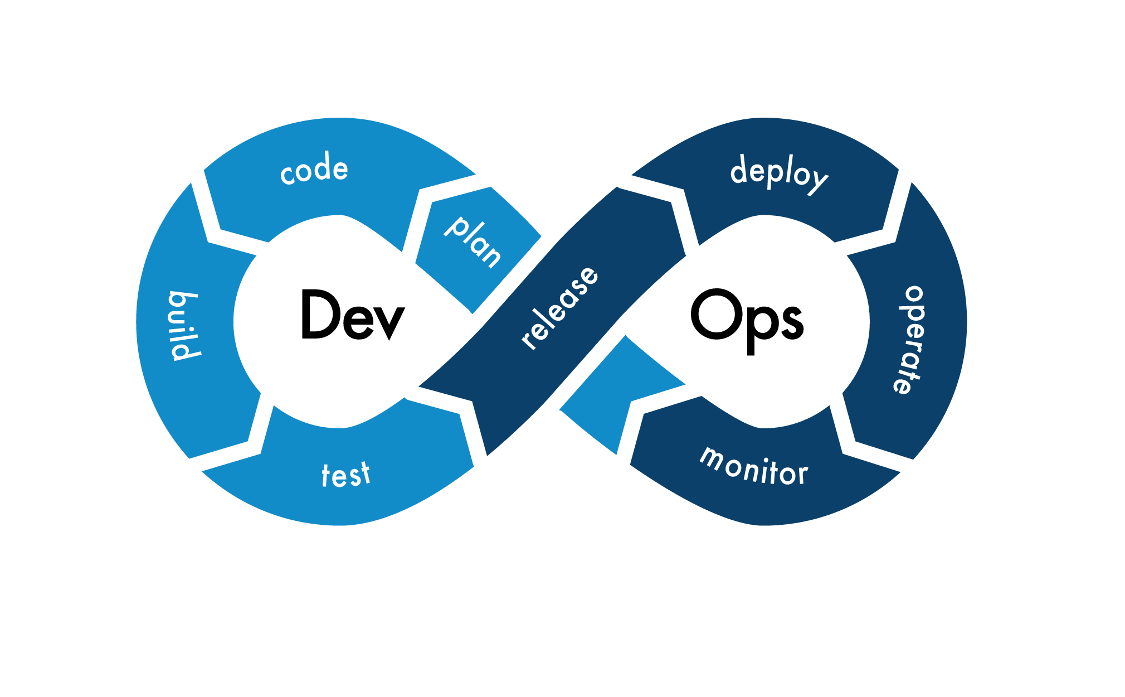

In the early stages of implementing AI in an agency, contractor AI personnel can help train and supplement government AI personnel. But as with commercial AI tools, outsourcing core capabilities wholesale to contractor personnel teams creates major operating risk to an agency’s ability to perform core functions. To design and build out your AI platform, think in terms of Infrastructure as Code (IaC), the ability to rapidly provision and manage infrastructure, and create automations and agile pipelines that support PeopleOps, CloudOps, DevOps, SecOps, DataOps, MLOps, and AIOps.

Infrastructure as code (IaC) brings the repeatability, transparency, and testing of modern software development to the management of infrastructure such as networks, load balancers, virtual machines, Kubernetes clusters, and monitoring. The primary goal of IaC is to reduce errors and configuration deltas and to increase automation, freeing engineers to spend time on higher-value workflows. Another goal is IaC that can be shared across the federal government. IaC defines the desired end state of your infrastructure, then builds the resources needed to reach that state and to self-heal when the infrastructure drifts from it. Using infrastructure as code also helps standardize cluster configuration and manage add-ons like network policy, maintenance windows, and Identity and Access Management (IAM) for cluster nodes and workloads in support of AI, ML, and data workloads and pipelines.
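The desired-state idea behind IaC can be illustrated with a minimal, tool-agnostic sketch in Python. It is not the API of Terraform, Pulumi, or any other IaC product; the resource names and attributes (`gpu-pool`, `etl-job`, `old-vm`) are hypothetical. The `plan` step compares the declared end state with what actually exists, and `apply` converges the actual state to it, so rerunning it after drift repairs the difference.

```python
from dataclasses import dataclass

# Hypothetical resource specs keyed by name. A real IaC tool would read the
# desired state from declarative configuration files and query the actual
# state from a cloud provider; plain dicts stand in for both here.
Spec = dict[str, dict[str, object]]


@dataclass(frozen=True)
class Action:
    kind: str  # "create", "update", or "delete"
    name: str


def plan(desired: Spec, actual: Spec) -> list[Action]:
    """Compare desired and actual state and list the changes needed."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(Action("create", name))
        elif actual[name] != spec:
            # The resource exists but has drifted from its declared config.
            actions.append(Action("update", name))
    for name in sorted(actual.keys() - desired.keys()):
        # Anything not declared is removed so the state matches the code.
        actions.append(Action("delete", name))
    return actions


def apply(desired: Spec, actual: Spec) -> Spec:
    """Converge actual state to desired state and return the new state."""
    new_state = dict(actual)
    for action in plan(desired, actual):
        if action.kind == "delete":
            del new_state[action.name]
        else:
            new_state[action.name] = dict(desired[action.name])
    return new_state


desired = {"gpu-pool": {"nodes": 4, "machine": "gpu"}, "etl-job": {"schedule": "daily"}}
actual = {"gpu-pool": {"nodes": 2, "machine": "gpu"}, "old-vm": {"size": "small"}}
state = apply(desired, actual)
```

Because `apply` acts only on differences, running it a second time against the converged state changes nothing; this idempotence is what lets the same configuration be reviewed, tested, and shared like any other code.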

Acquisition journey

After your agency decides to acquire commercial products or services, consider these practices to increase your odds of success:

- Use a Statement of Objectives (SOO) when you are less certain of a solution’s path and want to consider innovative or unorthodox methods. Consider using a Performance Work Statement (PWS) when you have clear specifications on what the product or service needs to do. Unlike a SOO, a PWS is written with measurable standards that tell the contractor the government’s desired outcomes. How the contractor achieves those outcomes is up to them, which empowers the contractor to use the best commercial practices and its own innovative ideas to achieve the desired results.

- Include technical tests in your solicitation as evaluation criteria. These tests should allow the technical subject-matter experts on your evaluation panel to verify that any proposed approaches apply to your program’s specific circumstances.

- Data rights and intellectual property clauses aren’t the only ways to ensure a project can move from one team to another. You’ll want to include deliverables like product backlogs and open source repositories with the entire source code along with all necessary artifacts to create technical and process-agnostic solutions. To minimize taxpayer exposure to repetitive buys, ensure at least government usage rights to balance private-sector concerns while maximizing the government’s investments.

- Use retrospectives on the acquisition process to identify key clauses and language that worked and those that caused problems, both in terms of the solicitation and post-award management. Document lessons learned to allow new and inexperienced members of the team to ramp up quickly.

- Share the results of your experiences with your federal colleagues. There is no better way to gain knowledge and improve the experience of implementing AI in a department or agency than working with others who have similar projects. You can join the Federal AI Community of Practice to connect with other government agencies working in AI.

Test and evaluation process

Some agencies in the defense and intelligence community already emphasize testing and evaluating software. Due to the nature of AI development and deployment, all AI projects should be stress tested and evaluated. Very public examples of AI gone wrong show why responsibility principles are a necessary and critical part of the AI landscape. You can address many of these challenges with a dedicated test and evaluation process.

The basic purpose of Test & Evaluation (T&E) is to provide knowledge to manage the risk involved in developing, producing, operating, and sustaining systems and capabilities. T&E reveals system capabilities and limitations in order to improve system performance, optimize system use, and sustain operations. T&E provides information on limitations (technical or operational) and Critical Operational Issues (COI) of the system under development so they can be resolved before production and deployment.

Traditional systems usually undergo two distinct stages of test and evaluation. First is developmental test and evaluation (DT&E), which verifies that a system meets technical performance specifications. DT&E often uses models, simulations, test beds, and prototypes to test components and subsystems, hardware and software integration, and production qualification. Usually, the system developer performs this type of testing.

DT&E usually identifies a number of issues that need fixing. In time, operational test and evaluation (OT&E) follows. At this stage, the system is usually tested under realistic operational conditions and with the operator. This is where we learn about the system’s mission effectiveness, suitability, and survivability.

Some aspects of T&E for AI-enabled systems are quite similar to their analogues in other software-intensive systems. However, AI has also introduced several changes in the science and practice of T&E. Some AI-enabled systems make it hard to decide what to test and how and where to test it; the answers depend on the project.

At a high level, however, T&E of AI-enabled systems is part of the continuous DevSecOps cycle and Agile development process. Regardless of the process, the goal of T&E is to provide timely feedback to developers from various levels of the product: on the code level (unit testing), at the integration level (system testing, security and adversarial testing), and at the operator level (user testing).

These assessments include talking with various stakeholders to define requirements and metrics, designing experiments and tests, analyzing the results, and making actionable recommendations to leadership on overall system performance across its operational envelope.

T&E for Projects

On a project, there are various levels of T&E; each reveals important information about the system under test:

Model T&E

This is the first and simplest part of the test process. In this step, the model is run on the test data and its performance is scored based on metrics identified in the test planning process. Frequently, these metrics are compared against a predetermined benchmark, between developers, or with previous model performance metrics.
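A minimal sketch of this scoring step, assuming a classification model whose predictions on the held-out test data are already available as a list. Accuracy stands in for whatever metric your test plan specifies, and the benchmark (0.75) and previous-model score (0.70) are illustrative placeholders, not recommended thresholds.

```python
from typing import Optional, Sequence


def accuracy(y_true: Sequence, y_pred: Sequence) -> float:
    """Fraction of test examples where the prediction matches the label."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("labels and predictions must be non-empty and equal length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def score_model(y_true: Sequence, y_pred: Sequence, benchmark: float,
                previous: Optional[float] = None) -> dict:
    """Score a model, then compare it with a benchmark and, optionally, a prior model."""
    score = accuracy(y_true, y_pred)
    report = {"accuracy": score, "meets_benchmark": score >= benchmark}
    if previous is not None:
        # Positive values mean the new model beats the previous one.
        report["change_vs_previous"] = score - previous
    return report


# Illustrative data: 10 held-out test labels and the model's predictions.
y_true = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
y_pred = [1, 0, 1, 0, 0, 1, 0, 1, 1, 1]
report = score_model(y_true, y_pred, benchmark=0.75, previous=0.70)
```

Here the model gets 8 of 10 examples right (accuracy 0.8), clearing the illustrative benchmark and improving on the previous model by about 0.1.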

The biggest challenge here is that models tend to be delivered to the government as black boxes due to IP considerations. For the most part, when we test physical systems, we know exactly how each part of that system works. With models, however, we don’t know how they work; that’s why we test. If you want testable models, you have to make that a requirement at the contracting step.

Measuring model performance is not entirely straightforward; here are some questions you might want your metrics to answer:

- How does the model perform on the data? How often does it get things right / wrong? How extreme are the mistakes the model makes?

- What kind of mistakes does the model make? Is there any evidence of model bias?

- Ultimately, does the model tend to do what it’s supposed to do (find or identify objects, translate, etc.)?
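Aggregate scores can hide the kinds of mistakes a model makes. The sketch below assumes labeled test data with a hypothetical group attribute for each example (here, whether an image was captured by day or at night); it counts each type of mistake and compares accuracy across groups. A large gap between groups is one possible signal of bias worth investigating, not proof of it, and all labels and groups are invented for illustration.

```python
from collections import Counter, defaultdict
from typing import Hashable, Sequence


def error_breakdown(y_true: Sequence, y_pred: Sequence) -> Counter:
    """Count each (actual, predicted) pair where the model was wrong."""
    return Counter((t, p) for t, p in zip(y_true, y_pred) if t != p)


def accuracy_by_group(y_true: Sequence, y_pred: Sequence,
                      groups: Sequence[Hashable]) -> dict:
    """Accuracy computed separately for each group of test examples."""
    correct: dict = defaultdict(int)
    total: dict = defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        total[g] += 1
        correct[g] += int(t == p)
    return {g: correct[g] / total[g] for g in total}


def max_accuracy_gap(per_group: dict) -> float:
    """Difference between the best- and worst-performing groups."""
    return max(per_group.values()) - min(per_group.values())


# Hypothetical object-identification results, split by capture condition.
y_true = ["vehicle", "person", "vehicle", "person", "vehicle", "person"]
y_pred = ["vehicle", "vehicle", "vehicle", "vehicle", "person", "person"]
groups = ["day", "day", "day", "night", "night", "night"]

mistakes = error_breakdown(y_true, y_pred)
per_group = accuracy_by_group(y_true, y_pred, groups)
gap = max_accuracy_gap(per_group)
```

In this made-up example, the model mislabels people as vehicles twice and a vehicle as a person once, and it is right on 2 of 3 daytime images but only 1 of 3 nighttime ones, a gap that would prompt a closer look at nighttime performance.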

Integrated System T&E

In this step, you evaluate the model not by itself but as part of the system in which it will operate. In addition to the metrics from model T&E, we look for answers to the following questions:

- Does the model performance change on an operationally realistic system? Does the model introduce additional latency or errors?

- What is the model’s computing burden on the system? Is this burden acceptable?
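Latency and computing burden can be estimated by timing the pipeline with and without the model stage. The sketch below uses Python’s standard `time.perf_counter`, `statistics.median`, and `tracemalloc`; `preprocess`, `fake_model`, and `full_pipeline` are hypothetical stand-ins for your pipeline and model, with `time.sleep` simulating inference cost. `tracemalloc` only tracks Python heap allocations, so GPU memory and native-library usage would need separate tooling.

```python
import statistics
import time
import tracemalloc
from typing import Any, Callable, Sequence

Stage = Callable[[Any], Any]


def median_latency(stage: Stage, inputs: Sequence[Any], repeats: int = 5) -> float:
    """Median wall-clock seconds per call of `stage`, over all inputs and repeats."""
    timings = []
    for _ in range(repeats):
        for x in inputs:
            start = time.perf_counter()
            stage(x)
            timings.append(time.perf_counter() - start)
    return statistics.median(timings)


def latency_overhead(without_model: Stage, with_model: Stage,
                     inputs: Sequence[Any], repeats: int = 5) -> float:
    """Extra median latency (seconds) introduced by adding the model stage."""
    return (median_latency(with_model, inputs, repeats)
            - median_latency(without_model, inputs, repeats))


def peak_python_memory(stage: Stage, x: Any) -> int:
    """Peak Python heap allocation (bytes) while running `stage` once on `x`."""
    tracemalloc.start()
    try:
        stage(x)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return peak


# Hypothetical pipeline stages, for illustration only.
def preprocess(x: float) -> float:
    return x * 2


def fake_model(x: float) -> bool:
    time.sleep(0.01)  # simulated inference cost of about 10 ms
    return x > 0


def full_pipeline(x: float) -> bool:
    return fake_model(preprocess(x))


if __name__ == "__main__":
    overhead = latency_overhead(preprocess, full_pipeline, inputs=[1.0, -2.0, 3.0])
    print(f"Model adds about {overhead * 1000:.1f} ms per input (median)")
```

Using the median rather than the mean keeps a few slow outliers from dominating the estimate; an operational test would also look at tail latency under realistic load.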

Operational T&E

In this step, we collect more information on how the AI model ultimately affects operations. We do this through measuring:

- Effectiveness (mission accomplishment)

- Suitability (reliability, compatibility, interoperability, human factors)

- Resilience (ability to operate in the presence of threats, ability to recover from threat effects, cyber, adversarial)
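Resilience testing can start with a simple question: does the model stay correct when its inputs are nudged slightly? The sketch below computes robust accuracy, the fraction of test examples the model still gets right under every perturbation in a small fixed set. The threshold model, inputs, and perturbation size are hypothetical, and fixed shifts are only a crude stand-in for real adversarial testing, which searches for worst-case perturbations.

```python
from typing import Callable, Sequence

Model = Callable[[float], bool]
Perturbation = Callable[[float], float]


def shift(delta: float) -> Perturbation:
    """Return a perturbation that adds a fixed offset to the input."""
    return lambda x: x + delta


def robust_accuracy(model: Model, inputs: Sequence[float], labels: Sequence[bool],
                    perturbations: Sequence[Perturbation]) -> float:
    """Fraction of examples classified correctly under *every* perturbation."""
    if len(inputs) != len(labels) or len(inputs) == 0:
        raise ValueError("inputs and labels must be non-empty and equal length")
    robust = sum(
        all(model(p(x)) == y for p in perturbations)
        for x, y in zip(inputs, labels)
    )
    return robust / len(inputs)


# Hypothetical model and test data, for illustration only.
def threshold_model(x: float) -> bool:
    return x > 0.5


inputs = [0.1, 0.45, 0.52, 0.9]
labels = [False, False, True, True]

# shift(0.0) is the unperturbed input, so clean correctness is always required.
clean = robust_accuracy(threshold_model, inputs, labels, [shift(0.0)])
perturbed = robust_accuracy(threshold_model, inputs, labels,
                            [shift(0.0), shift(0.1), shift(-0.1)])
```

Comparing `clean` (1.0 here) with `perturbed` (0.5) shows how much of the measured performance depends on inputs sitting far from the model’s decision boundary.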

Ethical T&E

Depending on the AI solution’s purpose, this step ensures the system does only what it’s supposed to do and doesn’t do what it’s not supposed to do.

Integration of acquired AI tools

To ensure that AI tools, capabilities, and services are not only acquired, but also properly integrated into the wider program’s business goals, consider these practices to increase your chances of success:

- Use a Statement of Objectives (SOO) under the Simplified Acquisition Threshold (SAT) to create a prototype or a proof of concept. Begin to standardize as you move into a pilot. When you have clear specifications on what the product or service needs to do and are ready to scale across the agency, be prepared to use a Performance Work Statement (PWS), which, as described in the Acquisition journey sub-section, incorporates measurable standards aligned with desired outcomes. Different phases of AI projects require different types of solicitations and cost structures.

- Include technical tests in your solicitation evaluation criteria. These tests should allow your technical subject-matter experts on your evaluation panel to verify that suggested approaches apply to your program’s specific circumstances.

- Data rights and intellectual property clauses aren’t the only ways to ensure a project can move from one team to another. Deliverables like product backlogs and open source repositories with the complete source code and all necessary artifacts are essential for creating technical and process-agnostic solutions. To minimize taxpayer exposure to repetitive buys, ensure the government maintains usage rights to balance private sector concerns while maximizing the government’s investments.

- Use retrospectives on the acquisition process to identify key clauses and language that worked and language that caused problems, both in terms of the solicitation and post-award management. Lessons learned should be standardized through documentation that will allow new and inexperienced members of the team to ramp up quickly.

- Share the results of your experiences with your federal colleagues. There is no better way to gain knowledge and improve the experience of implementing AI in a department or agency than collaborating with others doing similar projects.